Mythos is just the beginning

If you were waiting for a sign that superintelligence is coming, this is it

Claude Mythos Preview is, by every available benchmark, the most capable AI model ever built. But more striking than any benchmark are the thousands of zero-day vulnerabilities that Mythos found in every major operating system and every major web browser, many of them critical and several of them decades old. As a result, Anthropic deemed the model too dangerous to release publicly.

Much digital ink has been spent analyzing Mythos, its cyber abilities, and what they mean for our cybersecurity and national security. This is important and needs to be discussed. But the real headline is that Mythos is just the beginning. Finding abilities too dangerous to release will become the new normal, and it will only intensify as AI companies build towards AI superintelligence.

So what is Claude Mythos, what does it mean, and what should we do? Let’s dig in.

What is Claude Mythos?

Previously, Anthropic had three model sizes — Haiku (small), Sonnet (medium), and Opus (large). Capabilities generally increase as you increase the model size. Mythos is one such increase in size, larger than even Opus in the same way Opus is larger than Sonnet.

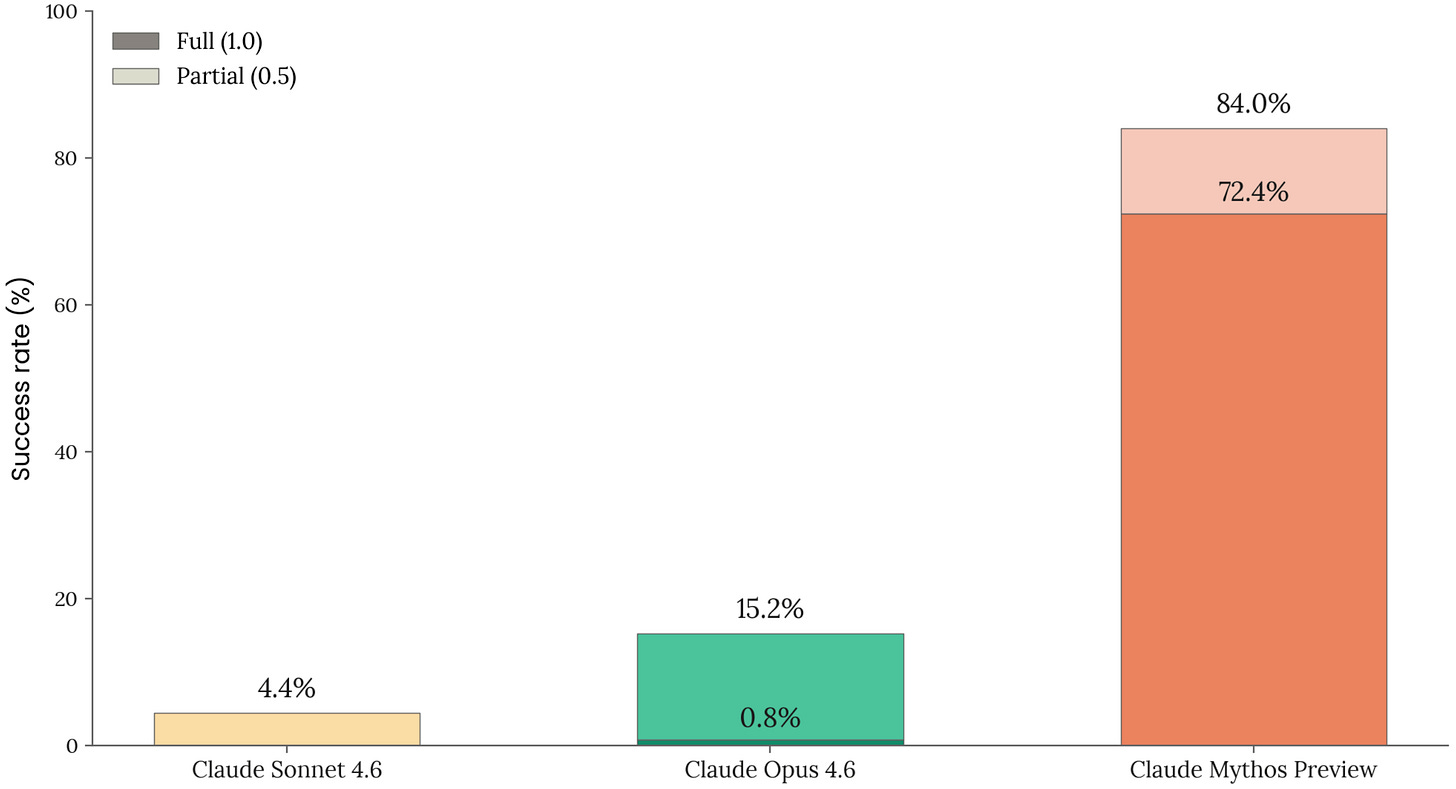

This increase in model scale has given Mythos markedly stronger capabilities in a variety of domains, above all cyberoffense. Yes, earlier models like Opus 4.6 could also do vulnerability discovery, especially with improved inference-time compute and improved scaffolding. This has led some to incorrectly dismiss the Mythos results as mere marketing hype.

But Mythos is plainly on another scale in terms of both quantity of vulnerabilities and typical severity. On a standardized Firefox exploit development task, the previous best model succeeded 2 times out of several hundred attempts. Mythos Preview succeeded 181 times. The UK government found Mythos to significantly outperform Claude Opus 4.6 on their 32-step corporate network attack simulation, with select runs completing all 32 steps.

As a result, Anthropic is drowning in high-severity vulnerabilities, of which fewer than 1% have been fully patched by their maintainers. Nicholas Carlini, a leading AI security researcher and Anthropic employee, said “I’ve found more bugs in the last few weeks with Mythos than in the rest of my entire life combined.”

Where this is going

On August 2, 1939, Einstein sent Roosevelt a letter warning that a nuclear weapon was possible. Six years later, it happened. This nuclear weapon was fully under government control. What would things have been like if the nuclear weapon had instead been developed by private companies?

Today, experts similarly warn that AI superintelligence may be possible and would be even more transformative to the structure of global power than the invention of nuclear weapons. Six years later, will it happen? And will it be under our control?

Every time a new model comes out, people focus on what it can do right now and don’t think enough about where the trend line leads. The cybersecurity story for Mythos, as alarming as it is, is not as important as the trend. A year ago, AI could barely hack at all. It wasn’t until around June 2025 that AI was reliably helpful for hacking, and it wasn’t until November that AI could autonomously implement hacks.

Consider that just a few years ago, some AI scientists and top forecasters predicted that AI would become capable of cyberoffense exceeding that of professional humans. Many people at the time thought this was impossible, or at least far off. But it’s now here, today.

Now consider that these same AI scientists and top forecasters are warning that this is just the beginning — and that the same scaling dynamic that produced Mythos's cyberoffense will produce sharper capabilities across strategy, weapons design, military planning, and more. Of course, being right about the first doesn't automatically mean being right about the second. But the people who called Mythos early were working from a model of how AI progress works, and that model is performing better than its critics'.

If you were waiting for a sign that superintelligence is coming, this is it. If private AI companies succeed in building even stronger capabilities over the next few years, including AI superintelligence, these private companies would greatly exceed the power of the United States government and all world governments combined. Worse, the private companies may not be able to successfully control the AI superintelligence, allowing the superintelligence to become a power center in its own right.

Anthropic already possesses a cyberoffense capability that rivals many nations and the ability to, if desired, cause major damage. It is great that American frontier AI companies like Anthropic have shown restraint with their AI and how they are releasing it. But this restraint shouldn’t earn these companies a blank check. And right now they essentially have one — the status quo for AI is that AI companies determine nearly everything.

Anthropic made every consequential decision in this story. Whether to lock down, what to lock down, when to tell the government, what to share, who gets early access and who doesn’t, how to vet those who get access, what level of risks are acceptable, and what “responsible” means across all of this… What could’ve happened if Anthropic had simply released Mythos publicly, as most AI companies would do with a flagship model? There’s no law against it. Overnight, every intelligence community operation that depends on signals exploitation is potentially compromised.

How confident is Anthropic that model access hasn’t reached adversary states through a downstream partner or a compromised employee at a partner organization? How confident is Anthropic that the model can’t be stolen and then misused by a highly motivated adversary? How confident is Anthropic that Mythos’s capabilities can be contained and how long should we be aiming to contain them? How confident is Anthropic that future AI models might not escape their containment and independently wreak havoc?

What should government policy be when a company produces, among other things, an unparalleled cyberweapon? What if the future release is also capable of building unprecedented bioweapons? What if the release after that risks genuine loss of control for humanity?

What should we do?

The Manhattan Project answered an analogous question with a specific institutional design — private contractors and university labs doing the actual work, inside a federal security perimeter, with classification rules, cleared personnel, and ultimate government authority over deployment. That model wasn't statist — General Groves and Vannevar Bush were not central planners — but it recognized that some categories of capability cannot sit entirely inside private decision-making. Whether this is the right model for AI is contested — but the question itself is unavoidable.

If you believe in AI superintelligence, many policies have become more urgent:

We need to be more serious about China’s ability to use American compute. Every AI chip that can train or run a Mythos-like model is more of a national security threat than before, and this will only continue. It will matter whether China can run 1,000 or 100,000 advanced hacker AIs, and the main way to stay ahead will be a compute advantage. Mythos was trained on an amount of compute that China cannot currently attain through domestic manufacturing. If China were to train a Mythos-like model this year, it would be on compute that is legally purchased from Nvidia, compute that is legally rented offshore, or compute that is smuggled. We need to better control semiconductor manufacturing equipment, prevent smuggling of chips, look again at what compute should be legal to sell to China and in what quantities, and ensure that the US maintains an advantage.

We need to ensure that China, or other adversaries, cannot steal and misuse the Mythos model weights. It doesn’t make sense to try to maintain a lead over China if China can just steal our best results. Having more total compute will still give us an advantage, but our advantage would be even stronger if China couldn’t steal our model as well. Unfortunately, security at major US AI companies is not yet up to the task, and the task is tremendously difficult. If China were able to steal and misuse Mythos, that would already be a big deal. I expect they will try, and even if they don’t succeed at first, they will eventually. The US government needs to help AI companies lock down their security.

We need to consider what level of government oversight there should be on these increasingly powerful AI capabilities. Congress, not agencies and not private boards, should define what happens at the upper end of the capability curve. At some point, decisions about deploying systems that rival the coercive capacity of governments cannot sit inside a private corporate structure, however well-intentioned its leadership. Under the Constitution, that authority belongs to Congress. Statute, with sunset clauses and judicial review, is how this gets done — ideally without creating a sprawling discretionary regulator.

We need a government body with the technical capacity to issue binding safety regulations on frontier AI companies. Drugs are regulated by the Food and Drug Administration, airplanes by the Federal Aviation Administration, and nuclear by the National Nuclear Security Administration. Drugs, airplanes, and nuclear are not perfect analogies for AI, but each combines promise with peril that needs to be carefully balanced. The FDA, FAA, and NNSA are not perfect regulatory bodies either; the FDA in particular arguably shows how government regulation can unduly slow the benefits of innovation. AI superintelligence will be potentially far more beneficial but also far, far more dangerous than any drug or airplane. Right now the US has the Center for AI Standards and Innovation, but everything it does is voluntary and it is badly under-resourced for what will need to be done. We need a narrow-mandate body motivated solely by national security and targeting only the most capable AI systems, with the proper resources and technical skill to react quickly to superintelligence.

We must also recognize that the AI race with China may be a race to see who loses control first. From a position of strength, we must consider negotiating mutual agreements on safe development. In order to do this, we will need better ideas about what safe development looks like and verification infrastructure to enforce a deal. In the late 1950s and early 1960s, the US, UK, and Soviet Union negotiated limits on nuclear testing but couldn't reliably detect underground tests, so the 1963 Limited Test Ban Treaty covered only tests in the atmosphere, underwater, and in outer space. By the time verification technology improved, the treaty was already signed and wasn't substantively revisited until three decades later. The lesson is instructive: we must invest in AI verification before we need it. Any future US-China agreement on AI development requires verification infrastructure that doesn’t yet exist. Without it, deals require trusting China not to defect. With it, deals become enforceable through technical means rather than faith.

We need a plan in case we want to slow down. Finally, the government should have contingency plans and insurance policies for scenarios in which the technical community concludes that further capability gains, on current methods, are outrunning our ability to control the resulting systems. This is not a recommendation to slow down now, but it would be prudent to know what slowing down would actually require and to have the option — before the moment arrives and we realize we have no brakes.

Looking forward

The national security implications of Mythos-like models are clear. But the stakes of AI superintelligence would be orders of magnitude higher. The point is not that Mythos will go rogue. The concern is that AI ten iterations beyond Mythos could go rogue… and Mythos illustrates, perhaps for the first time, how a superintelligent AI going rogue would pose a serious threat to national security.

From Mythos, it is a straight shot in just a year or two to AI systems that are going to be far too strong to ignore. And from there, it won’t be long to superintelligence. It would be better to have a prepared government that already has practice getting things right, rather than a government rushing to the scene after it’s already too late.

I think this is a bit overstated:

- Mythos's cyberoffense capability probably does not rival that of nation states (who actually invest in maintaining a cyberoffense team). My mental model for a while has been that AI's comparative advantage in cyber offense is in scaling attacks that do not depend on a high degree of reliability or on coordination across multiple attack vectors, i.e., ransomware attacks, not top-tier nation-state hacks. SL3 in RAND parlance.

- You seem to be doing the "generalize from one capability to many others" mental motion, but I think a fairly standard "capabilities are jagged" + "depends on whether tasks are easy or hard to verify" model would predict both the great gains in cyber and that models will improve much more slowly at, e.g., strategy.

- On superintelligence, I'm starting to want this definition broken down further, since the further we progress, the more it suffers from the same issues as AGI. Currently it can be used to imply basically any level of capabilities desired, even those dependent on accumulating vast resources.

- "Overnight, every intelligence community operation that depends on signals exploitation is potentially compromised." seems vastly overstated? I think it would have broadly been fine, if a bit bumpy, if Mythos-level models are public.

- Agree on the need for govt preparation and to prevent model weight theft!

Excellent thoughts!

Something I'm not hearing discussed much is what state actors are doing with their current zero-day stockpiles.

I would expect that as soon as they saw the exploit stats, and saw that Glasswing involved too many people to persuade to leave their zero-days unpatched, they would start using them to achieve goals, even if inefficiently, as quickly as possible.

Presumably, every day more of the vulnerabilities identified by Mythos are being patched, and nation states have little way of knowing whether Mythos will uncover an exploit they paid 800k for, so it's quickly becoming a use-it-or-lose-it situation. I'm sure some will be kept in reserve, but it's a gamble. So stockpiles are likely being deployed now in ways and for goals which potentially won't become public for many years.

The NSA must be very upset about Mythos, especially given the WH-Anthropic feud. Mythos also reduces the extent to which the NSA can maintain a capabilities lead through recruiting top cyber talent.