Should the US do a Manhattan Project for AGI?

Such a Project is neither inevitable nor a good idea

This article was written by Oscar Delaney, Bill Anderson-Samways, and Peter Wildeford. It does not necessarily represent the opinion of the entire staff at the Institute for AI Policy and Strategy.

~

The idea of a government-led program to “win the race” with China to Artificial General Intelligence (AGI) has gone mainstream. From Congressional commissions to government agencies to AI company CEOs, high profile calls for a Manhattan Project-style effort are growing. Leopold Aschenbrenner’s popular essay Situational Awareness predicted “some form of government AGI project” by 2027-2028. Meanwhile, others remain skeptical.

How likely is this really? What would it actually look like? And most importantly, is it even a good idea? Our new forecasting research suggests the answers are far from certain. Professional forecasters estimate just a 34% probability of a government-led AGI program — neither inevitable nor impossible. More importantly, treating a government project as inevitable could trigger the very risks we’re trying to avoid.

Forecasters Say a US-Led AGI Project Is Far From Inevitable

We at the Institute for AI Policy and Strategy ran a structured forecasting workshop with professional forecasters and US AI policy experts to estimate the probability of a US government-led project building the first AGI-level system.

We defined “AIR-10” as an AI system that accelerates AI R&D progress tenfold, essentially compressing a decade of AI development into a year. This matches what many consider the threshold for transformative AI that could trigger recursive self-improvement, and is the threshold Aschenbrenner uses for AGI.

The aim was to forecast the likelihood that AIR-10 is developed via a “government-led project.” By this, we meant that the government both decides when to start/stop developing the AI model AND acquires the final model to deploy as it sees fit. This is more stringent than government regulation or oversight alone: under this definition, the government takes direct leadership and command over key development and deployment decisions. However, the private sector may still be heavily involved, for example in a government-led public-private partnership.

Some commentators (e.g. Aschenbrenner) have suggested that a project run by the US government to achieve AGI is inevitable as a result of the geopolitical importance of AGI. Our results might surprise those who see it as inevitable — the median forecast was a 34% chance.

Furthermore, there was a lot of uncertainty among forecasters in our panel, with an average range of 11% to 61%. This wide spread shows that even experts who study this closely are deeply uncertain. Anyone claiming to know for sure whether the government will or won’t lead AGI development is likely overconfident. We need to prepare for multiple scenarios, not just assume one particular future.

History Offers a Messy Guide, Not Clear Predictions

Those predicting a government AGI project often invoke historical analogies — the Manhattan Project, the Apollo Program, and even ARPANET. But when we systematically analyzed 35 past US technological innovations, the picture that emerged was more nuanced.

What’s the correct historical comparison? Whether the government leads depends heavily on what kind of technology you think AGI is:

Megaprojects costing >0.1% of GDP (like the Interstate Highway System): government-led 78% of the time

Ambitious STEM projects (like the atomic bomb): government-led 63% of the time

Dual-use technologies with both beneficial and harmful applications (like synthetic virology): government-led 57% of the time

General-purpose technologies (like the airplane): government-led only 40% of the time

Past AI breakthroughs (like the transformer): government-led only 23% of the time

AGI has features of all these categories, which is why the historical precedent is hard to pin down. AGI is a general-purpose technology with massive economic potential, in a field that has to date been private-led, which suggests a high likelihood of continued private leadership. But AGI is also a dual-use technology with profound national security and geopolitical implications, and it may require megaproject-scale resources beyond what private companies can muster. Those factors all point toward government involvement.

We presented our forecasters with this data during the workshop described above. As a result, they updated their forecasts a little, but not much — probably reflecting the significant ambiguity in the historical precedents.
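To make the ambiguity in these reference classes concrete, here is a minimal sketch of how the five base rates quoted above could be blended into a single prior. This is purely illustrative and is not the method our forecasters used: the function name `weighted_base_rate` and both weighting schemes are assumptions introduced for this example.

```python
# Base rates quoted above: share of past projects in each reference
# class that were government-led.
base_rates = {
    "megaprojects (>0.1% of GDP)": 0.78,
    "ambitious STEM projects": 0.63,
    "dual-use technologies": 0.57,
    "general-purpose technologies": 0.40,
    "past AI breakthroughs": 0.23,
}


def weighted_base_rate(rates, weights=None):
    """Return a weighted average of the base rates.

    Equal weights are used by default. Any weighting is a judgment
    call about which reference class AGI most resembles.
    """
    if weights is None:
        weights = {name: 1.0 for name in rates}
    total_weight = sum(weights[name] for name in rates)
    return sum(rates[name] * weights[name] for name in rates) / total_weight


# Scenario 1 (assumption): every reference class counts equally.
equal_prior = weighted_base_rate(base_rates)

# Scenario 2 (assumption): past AI breakthroughs count three times as much.
ai_heavy_weights = {name: 1.0 for name in base_rates}
ai_heavy_weights["past AI breakthroughs"] = 3.0
ai_heavy_prior = weighted_base_rate(base_rates, ai_heavy_weights)

print(f"Equal weights:    {equal_prior:.1%}")
print(f"AI-heavy weights: {ai_heavy_prior:.1%}")
print(f"Spread of classes: {min(base_rates.values()):.0%} to {max(base_rates.values()):.0%}")
```

With equal weights the blended prior is roughly 52%, and tilting toward the AI-specific class pulls it down toward the low 40s. The point of the sketch is that the answer moves substantially with a weighting choice the historical data cannot settle.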

National Security and the China Factor

In our forecasters’ estimation, the single most powerful factor that could compel government action is a perceived military-technological threat from China. If the US believes it’s on the verge of losing its strategic advantage, political will for a government-led project could materialize overnight.

Nascent versions of this fear already exist. The US-China Economic and Security Review Commission has already called for a Manhattan Project for AGI in its 2024 report to Congress. The narrative of an AI arms race with China has become deeply embedded in Washington.

Importantly, national security concerns both make government involvement more likely and shape what form that involvement might take. An AGI project driven by military imperatives would look very different from one focused on economic competitiveness or scientific advancement.

AI’s Breakneck Pace vs Government’s Glacial Speed

However, the US government is not known for developing technology quickly, especially compared to the pace of AI progress. By the time the government decides to become more involved in AGI development, private AI companies might have already built it.

This creates a timing problem. The AIR-10 threshold we defined, while transformative, might not be “attention-grabbing” enough to trigger government action to achieve it. This is unlike nuclear weapons, where the ability to clearly and unambiguously destroy an entire city constituted a significant, discrete, and noticeable threshold that was salient to policymakers even before it was achieved.

Several of our forecasters emphasized this dynamic — if AIR-10 arrives in the next few years (as some predict), that might be too soon for the US government to organize a successful intervention. Only if the path to transformative AI stretches out over a longer timeline does government leadership become more likely. When providing their central 34% estimate, forecasters were asked to condition on AIR-10 arriving at some point before 2035.

Less ‘Manhattan Project’, more ‘Apollo Program’ / ‘Operation Warp Speed’

Additionally, the “AI Manhattan Project” framing is potentially misleading. When people use this term, they often imagine a single, secret government AI lab that builds AGI entirely from scratch. However, according to our forecasters, it’s only about 3% likely that AIR-10 will be developed specifically in this way. Other options involving major government in-house resources, nationalization of an existing private company, or otherwise using legal compulsion to force the development of AGI under US government control were also considered similarly unlikely.

Based on our analysis, if the government does lead AGI development, we forecast the project would most likely take one of these forms:

Government-Led Consortium (14% probability): This is the “Apollo Program” model. In this model, the US government wouldn’t build AGI itself but would coordinate multiple private companies (and maybe even government labs too, though relying mainly on government labs seems unlikely). For example, NASA managed contractors like Boeing and North American Aviation to do the moon landing. This avoids “picking winners” and leverages existing private sector talent.

Single Private Contractor (9% probability): This is the “ENIAC” model. In this model, the government contracts with one specific company to build AGI to government specifications. This is faster to implement but somewhat riskier, as it involves picking a particular company to be the national champion.

The original Manhattan Project itself was actually closer to a government-led consortium than is widely thought, with multiple government labs and private contractors involved—though it was much more government-centric than what our forecasters envision for AI. The variety of possible models matters because each comes with different tradeoffs in terms of speed, security, and innovation.

From Commercial Competition to an AI Arms Race

The consequences of getting a government-led AGI project wrong would be severe. A poorly designed government project could trigger the very catastrophes it aims to prevent.

Currently, AI development is a commercial competition. While intense, it allows for some safety investment and shared research. A formal, government-led US project would upend this dynamic, sending an unmistakable signal to the world — especially China — that America is seeking a decisive strategic advantage.

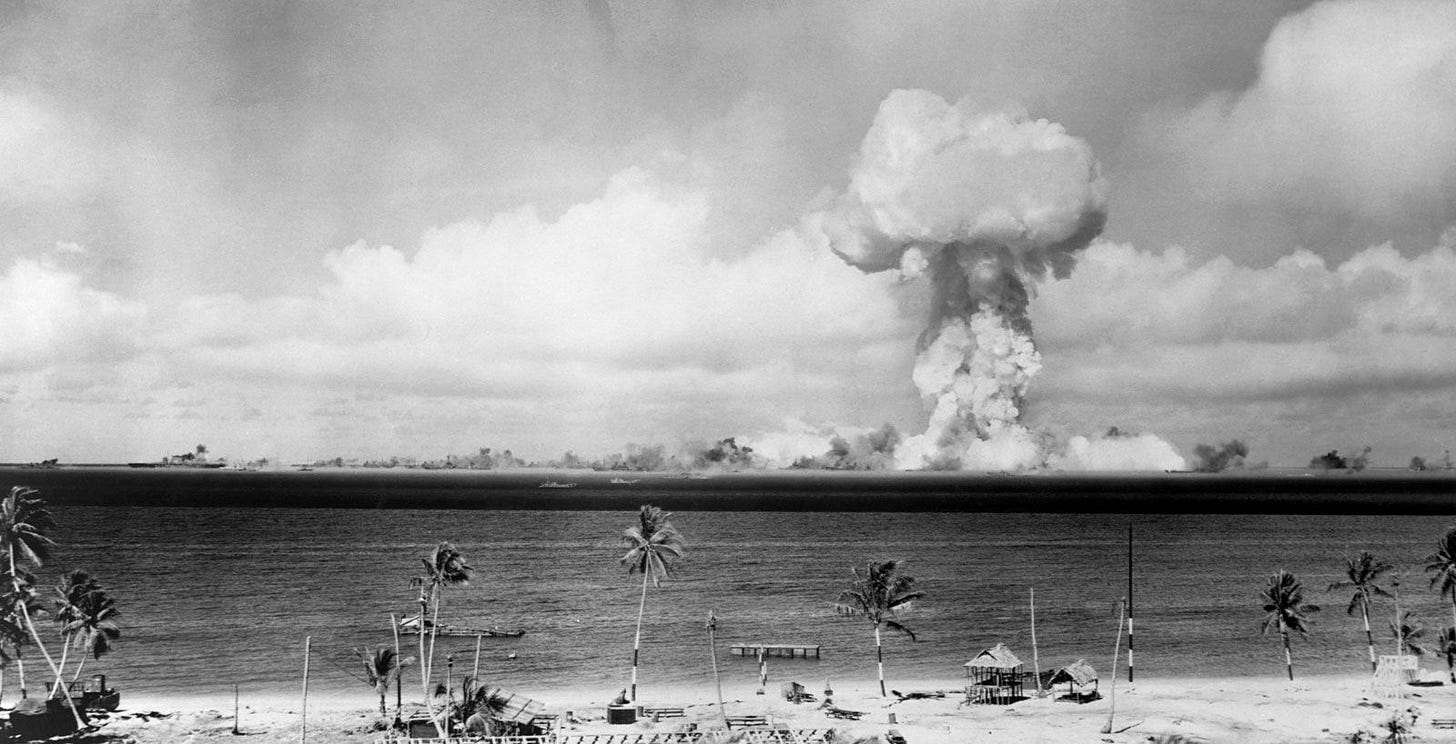

The response would be swift and predictable. A rival government may feel forced to launch its own centralized, government-led program, transforming a commercial race into a direct military-technological showdown. History supports this concern — the Manhattan Project triggered a nuclear arms race that saw the Soviet Union detonate its own test bomb just four years later.

Unlike with the Manhattan Project, however, the key stage of an AI race could unfold in mere months, leaving even less room for safety precautions under the pressures of national security. That could heighten the risk of a catastrophic accident caused by hastily deployed AI systems.

This dynamic could be mitigated if multiple companies join the project, which would at least nullify the commercial race. However, our forecasters consider this unlikely: they put the chance that a government-led project would involve more than one AI company at less than 50%, primarily because of the coordination costs involved in such programs.

In some circumstances, the AI arms race caused by a government-led AGI project could even trigger a great power war. As noted above, a US project could be perceived as an attempt to achieve a decisive strategic advantage unparalleled since the advent of nuclear weapons. In such circumstances, a rival might also consider escalatory actions, such as a cyberattack on data centers or even threats of missile strikes, which could then escalate into an all-out war.

Concentrated AI Power Threatens Democratic Legitimacy

While the risk of international conflict is terrifying, a centralized AGI project also threatens the erosion of democratic legitimacy.

The American system is built on Madisonian checks and balances, pitting ambition against ambition to prevent any one person or group from accumulating concentrated power. A secretive AGI project, led by executive branch agencies, would greatly concentrate the unprecedented power that AGI would bring. Even if this did not pose a direct threat to democracy, it would certainly erode democratic trust and legitimacy.

Some might argue that, without a government-backed AGI program, rival governments are more likely to beat the US to AGI. This would concentrate power in adversaries’ own, far less democratic political systems. However, this argument relies on the inaccurate idea that adversaries already possess centralized AGI programs which could allow them to overcome US companies’ current lead. Though the current pace of Chinese AI development is certainly concerning, open-source information indicates that China has not centralized its compute or researchers into such a program. Thus, US companies retain a considerable advantage. In fact, a US government-led program could prompt rival governments to launch their own centralized projects, which could paradoxically shrink the US lead.

Concentrated government power is not the only concern. A government-led AGI program could also concentrate power in the hands of tech companies. If such a program involved multiple AI companies, it would essentially eliminate market competition, creating a cartel with access to enormous amounts of economic and military power. Such a situation has not occurred since the apex of the British East India Company, which in the 1700s came to account for half the world’s trade and rivaled the military might of the great powers. Even if only a single company were involved in the project and market competition were preserved, giving a private company access to the government resources needed to develop decisive military capabilities would be unprecedented.

The Security Benefits Don’t Require Building AGI

The strongest argument for a government project is better security. Private AI companies are vulnerable to infiltration and theft, especially from well-resourced governments. A government project could implement military-grade security, reducing risks of AI being stolen by adversaries.

This is a real concern that deserves serious attention. But you don’t need to build AGI to secure it. Instead, security benefits can be achieved through more targeted, less escalatory policies such as:

Mandatory security standards for private AI companies handling very advanced AI systems

Government partnerships to assist companies with securing model weights without taking over development

Enhanced counterintelligence support for private AI companies

The government doesn’t need to build all nuclear reactors to ensure nuclear security. It sets standards, monitors compliance, and provides security assistance while letting private companies operate the reactors. These targeted interventions could achieve 80% of the security benefits with 20% of the risks. They avoid triggering international arms races or concentrating power dangerously. Most importantly, they can be implemented incrementally and adjusted as we learn more about AI risks.

Preparing for Multiple Futures, Not Sleepwalking into Disaster

The future is genuinely uncertain. Our research shows that a government-led AGI project is neither inevitable nor impossible.

This uncertainty tells us something important about how to approach AI governance. Rather than assuming a single trajectory and optimizing for it, we need robust strategies that work across multiple scenarios.

That means avoiding self-fulfilling prophecies. Treating a government project as inevitable could trigger the very international dynamics we’re trying to avoid. But dismissing it as impossible leaves us unprepared if geopolitical pressures suddenly shift.

We need a portfolio of approaches that work across different government involvement levels:

Strengthen private AI security: Improve security protocols and infrastructure at private AI labs to prevent theft of American IP.

Track adversaries’ AI progress: Monitor reliable indicators, such as compute and researcher centralization, that would let the US government detect adversaries launching their own government-led programs.

Build government readiness: Develop in-house expertise and plans in case government leadership does become necessary.

Most importantly, we must remember that government involvement isn’t binary. There’s a spectrum from light-touch regulation to full nationalization, with many points in between. The goal should be finding the minimum effective dose — enough government involvement to ensure safety and security, but not so much that we trigger the very catastrophes we’re trying to prevent.

The stakes are too high for ideological purity. Whether you’re a tech accelerationist who believes in private innovation or a national security hawk worried about China, we all share an interest in navigating this transition without triggering catastrophic accidents, conflict, or concentration of power. That requires taking uncertainty seriously and preparing for multiple futures, rather than assuming we know which one will unfold.
