The Pentagon's War on Anthropic

The Pentagon has a legitimate principle, and a terrible strategy for enforcing it

Anthropic’s Claude is the only frontier AI system known to be operating on the Pentagon’s classified networks. Claude arguably helped capture Maduro.

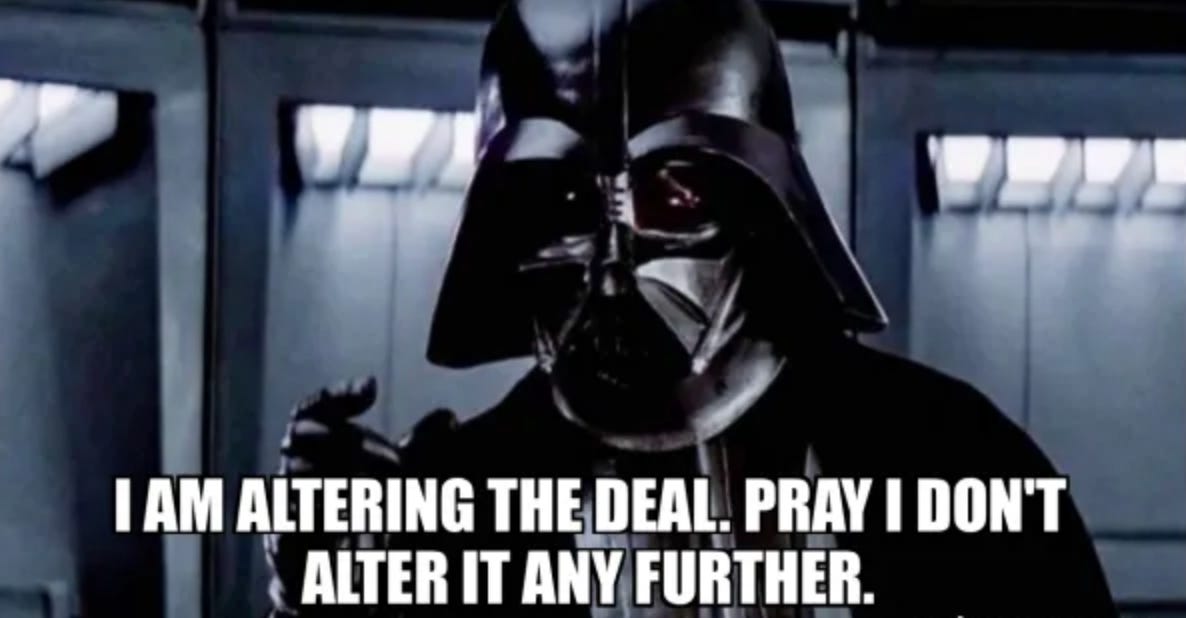

But the Department of War wants more. On Tuesday, Secretary of War Pete Hegseth summoned Anthropic CEO Dario Amodei to the Pentagon and gave him until 5:01 PM today to grant the military fully unrestricted access to Claude. Hegseth threatened not only to terminate Anthropic’s $200 million contract but also to escalate in one of two ways: designating Anthropic a “supply chain risk,” or invoking the Defense Production Act to legally compel Anthropic’s compliance.

A “supply chain risk” designation is normally used only for Chinese equipment like Huawei’s and has not yet been applied even to Chinese AI like DeepSeek. It would ban Anthropic from doing business with other Department contractors, which would be a major loss of business.

Use of the Defense Production Act, normally reserved for critical national security emergencies, would suggest Anthropic is the exact opposite of a “supply chain risk”: it would deem Anthropic so essential to the national defense that the government can compel the company to produce even if it doesn’t want to.

Anthropic refused last night, saying “We cannot in good conscience accede to their request.” We will now see if Hegseth follows through on his threats.

Why is this happening? Why has the Department gone to war over the model they’ve been relying on?

Much of this dispute is playing out in classified settings, and the public details are a mixture of facts and leak-based PR campaigns from both sides designed to shape the debate. But what we do know is concerning enough to take seriously. Some have framed this as a fight over “woke AI”, accusing Anthropic of overstepping and backseat driving the Department. It isn’t.

What the Pentagon Wants

To understand how we got here, it helps to understand a different case. In 2017, the Department launched Project Maven — officially the Algorithmic Warfare Cross-Functional Team — to use machine learning to analyze drone surveillance footage. The military was collecting far more full-motion video from drones than human analysts could process, and it needed AI to help sort through the data, identify objects of interest, and flag patterns that analysts could then review.

But in 2018, thousands of Google employees signed a petition protesting their company’s involvement. Google pulled out. The damage was real and lasting. Analysts who had been promised AI tools to process overwhelming volumes of drone footage were left without them. Programs that had taken months to scope and staff had to start over. The episode left a justified conviction that Silicon Valley would abandon the warfighter the moment it became politically inconvenient.

So the Pentagon’s frustration might seem straightforward. According to the Pentagon, Anthropic must agree to let the military use Claude for “all lawful purposes” without restriction. The Department’s position is that the military’s use of technology should only ever be constrained by the Constitution and the laws of the United States — not by a private company’s acceptable use policy.

This is an entirely defensible principle. Raytheon does not tell the Pentagon which targets to hit with its missiles, and Lockheed Martin does not tell the Pentagon which missions to fly with its planes. The military's authority to determine how it employs its tools is fundamental to civilian control of the armed forces. If every defense contractor could carve out moral vetoes over specific use cases, the result would be a patchwork of private restrictions that collectively let corporate boards shape military doctrine. That precedent problem is real and serious regardless of whether you think Anthropic's specific restrictions are reasonable. Thus the Pentagon's desire for unrestricted access may reflect not mere bureaucratic stubbornness but a real operational principle: tools in theater need to be predictable and fully available.

But that principle runs into three problems: one of bad incentives, one of ignoring known technical limitations that make certain uses unreliable regardless of what the contract says, and one of reacting entirely out of proportion to what should be an ordinary contract negotiation. That's where these operational principles and Maven analogies break down in practice.

What Anthropic Wants

Put simply, Anthropic is not walking away from national security use. Claude is now integrated into Palantir's MAVEN Smart System — the successor to the very infrastructure Google abandoned. Anthropic was the first to provide custom models for national security customers. Claude is used across the Department for intelligence analysis, operational planning, cyber operations, and modeling and simulation. Anthropic has never raised objections to any particular military operation and never attempted to limit use of its technology on an ad hoc basis.

But when writing this contract with the Pentagon, Anthropic drew exactly two lines upfront:

First, Anthropic wanted no mass domestic surveillance of Americans. Anthropic supports the use of AI for lawful foreign intelligence and counterintelligence. But according to Anthropic, mass domestic surveillance is a different category. The law hasn’t yet been updated to account for how easily Claude or other AI can fuse scattered, individually innocuous data into a comprehensive picture of any person’s life, automatically and for millions of people simultaneously. That is a potentially large risk to liberty, and it should make any American nervous. “All lawful purposes” becomes a much bigger opening when AI radically expands what is technically feasible within legal gray areas that predate the age of AI.

Second, Anthropic asked for no fully autonomous lethal weapons without human oversight. This is because we don’t yet have “military-grade AI” — AI systems do not reliably execute commander’s intent and maintain chain-of-command fidelity under contested operational conditions. Current commercially available AI is too error-prone for effective warfighting and remains vulnerable to sabotage by adversaries — failures that could cause the United States to lose engagements and put warfighters at risk.

The Pentagon originally said yes to both of these principles. Then it reversed, and neither side has publicly explained why. Whether this was driven by a specific operational need that hit the restrictions, by political pressure from the administration, or by something else entirely matters enormously for how to interpret this dispute. But whatever the reason, in a normal contracting relationship, a change in requirements leads to contract termination, not coercion. Using the “supply chain risk” designation or the DPA in a contract dispute would be an unprecedented strong-arm tactic: the former is normally reserved for combating Chinese technology and the latter for times of acute national security crisis. Either one risks undermining the very partnerships the Pentagon needs.

Think about the incentives

This administration has positioned itself as pro-innovation and anti-overreach on AI. The AI Action Plan emphasized that American competitiveness depends on letting the private sector lead. That is the right posture for a country in a technology race with China.

But using the Defense Production Act to compel an AI company to retrain its models without safeguards, or designating a domestic AI champion as a supply chain risk over a contract disagreement, cuts directly against that posture. It is the biggest potential regulatory overreach in the AI space of any administration to date.

And the incentives are terrible. Any AI company watching this learns that Pentagon contracts can be renegotiated at any time for any reason, and that provisions the Pentagon agreed to — even ones that clearly uphold existing law and Department directives — could become the basis to punish you. The rational response is to never get on classified networks in the first place. Do what Google did with Maven and walk away.

What Should Happen

When OpenAI, Google, and xAI signed “all lawful purposes” agreements with the Pentagon, none of them were operating on classified networks under real operational pressure. It’s easy to agree to everything when the question is hypothetical and you want the business. Anthropic was the first to hit the hard problems because it was the first actually doing the work.

If the Pentagon doesn’t like the contract anymore, it should terminate it. Anthropic has the right to say no, and the Pentagon has the right to walk away. That’s how contracting works. The supply chain risk designation and DPA threats should come off the table — they are disproportionate, likely illegal, and strategically counterproductive.

But termination doesn’t solve the underlying problem: there is no legal framework governing how AI should be used in military operations. Right now the rules are being set through a combination of corporate acceptable use policies and Pentagon ultimatums, and that is not going to hold. Boeing’s flight control software is tested against known failure modes and certified to specific tolerances. LLMs are probabilistic and opaque. When Claude is used for intelligence analysis today, a human analyst reviews the output and applies judgment — and that’s working. Fully autonomous use near the kill chain is a different category of risk, and every frontier AI company in the world knows their models aren’t ready for it, whether they’ll say so publicly or not.

Congress needs to set the rules here: reliability standards for AI in operational contexts, incident reporting for AI-enabled systems, oversight for autonomous weapons, and statutory limits on AI-powered domestic surveillance. Congress should also fund the development of military-grade AI — systems that can execute commander’s intent under contested conditions, resist adversarial manipulation, and maintain positive command authority. DARPA has already made progress on this, but it needs dedicated funding and real coordination with frontier labs. No one has solved the reliability problem alone.

The warfighters who will depend on these systems deserve better than a contract dispute playing out over media leaks. And the public deserves a say in whether AI is used to surveil them — through their elected representatives, not behind closed doors.

